Overview

A leading marketing advisory firm specializing in wealth management and investor communications ran a core generative AI capability, AI-powered email generation designed to meet FINRA and SEC requirements, on OpenAI GPT models. While the solution delivered early value, growing concerns about data security, compliance governance, escalating API costs, and single-vendor dependency created an urgent need to migrate to a more enterprise-grade, sovereign AI stack.

42% Reduction in AI Infrastructure Costs

60% Faster Compliant Content Generation

50% Reduction in Compliance Review Cycles

Customer Challenges

The organization had invested significantly in building an AI email generation system tuned to OpenAI's GPT models. As the solution matured, a set of compounding challenges made it clear that the existing architecture was not sustainable for a regulated financial environment.

Data Security & Regulatory Exposure

Sending sensitive investor data and proprietary financial content to a third-party API operated outside the firm's cloud perimeter introduced unacceptable data residency and privacy risks. Meeting evolving FINRA and SEC data governance expectations required all AI workloads to run within VPC-isolated, enterprise-controlled environments, a standard that OpenAI's API model could not satisfy.

Escalating Costs and Unpredictable Pricing

OpenAI's token-based pricing, combined with high-volume email generation, caused infrastructure costs to rise rapidly with limited control or predictability. Without committed pricing arrangements or the ability to choose a model per task, the firm needed a more cost-efficient, multi-model AI infrastructure.

Prompt Library Degradation Risk During Migration

The firm's compliance email generation system relied on a carefully engineered library of LLM prompts, each optimized for GPT model behavior. Migrating to a different model family without a structured, evaluation-driven approach risked significant performance degradation across agents, workflow steps, and compliance validation logic, a risk that could not be absorbed in a regulated environment.

Single-Vendor Lock-In and Limited Governance

Reliance on a single external AI vendor created strategic fragility. The organization lacked the ability to switch models as the AI landscape evolved, negotiate competitive pricing, or enforce enterprise-grade audit trails and approval workflows on content generated through third-party APIs.

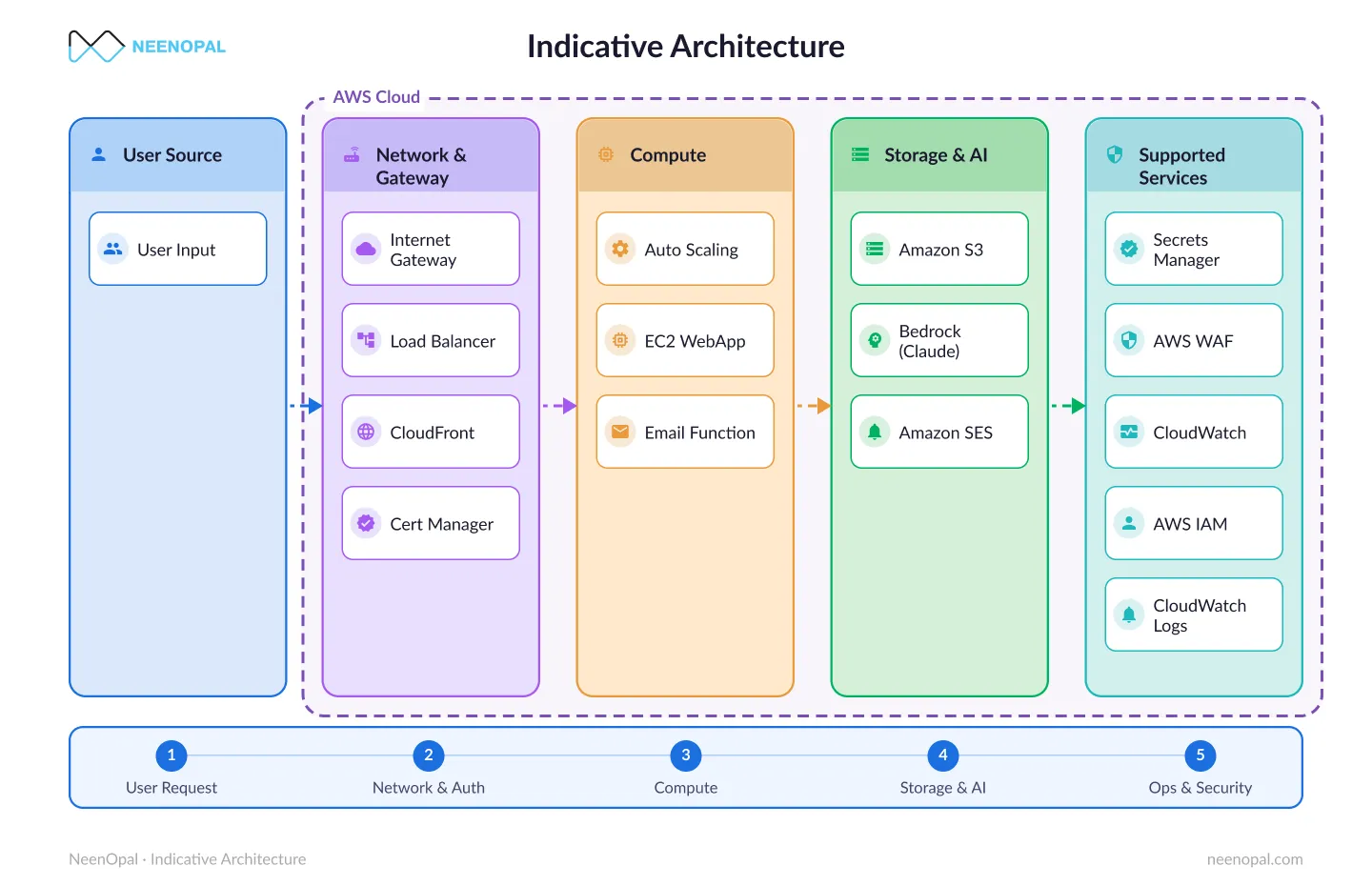

Secure GenAI Architecture on AWS

This architecture shows how the organization migrated its AI email generation system from OpenAI GPT to Amazon Bedrock, using Claude models for content generation and Amazon OpenSearch-powered RAG pipelines for real-time compliance validation, enabling secure, scalable, and enterprise-ready AI operations.

Solutions

NeenOpal applied its structured LLM Migration Framework, a five-phase, evaluation-driven methodology, to migrate the firm's entire GenAI stack to Amazon Bedrock, while simultaneously upgrading the compliance validation architecture with OpenSearch-powered RAG pipelines.

01.

Structured Prompt Audit & Migration Framework

NeenOpal conducted a complete audit of the firm's prompt library, cataloguing every prompt across agents, workflow steps, and compliance pipelines. Each prompt was mapped to its GPT-specific behavioral dependencies. Prompts were then systematically re-engineered for Claude and Titan models on Amazon Bedrock, preserving original compliance intent while leveraging the new models' architectural strengths. A comprehensive evaluation baseline was constructed from production logs, enabling objective quality benchmarking throughout the migration.
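The audit-and-benchmark loop described above can be sketched as follows. This is a minimal illustration under stated assumptions: the record types, the phrase-based scoring rule, and the 0.02 tolerance are hypothetical stand-ins, since the source does not describe NeenOpal's actual metrics. It shows how each catalogued prompt could be scored on cases drawn from production logs and accepted only if the migrated version stays close to the GPT baseline.

```python
"""Minimal sketch of an evaluation-driven prompt migration check.

All names (PromptRecord, EvalCase, score_output, migration_gate) are
hypothetical; the phrase-based score is a simple stand-in for whatever
quality metrics a real evaluation baseline would use.
"""
from dataclasses import dataclass, field


@dataclass
class PromptRecord:
    """One catalogued prompt and the GPT-specific behaviours it depends on."""
    prompt_id: str
    stage: str  # e.g. agent, workflow step, or compliance check
    template: str
    gpt_dependencies: list[str] = field(default_factory=list)


@dataclass
class EvalCase:
    """A test case derived from a production log entry (hypothetical shape)."""
    inputs: dict[str, str]
    required_phrases: list[str]   # content the output must contain
    forbidden_phrases: list[str]  # content the output must never contain


def score_output(output: str, case: EvalCase) -> float:
    """Return a score in [0, 1]; any forbidden phrase scores 0."""
    text = output.lower()
    if any(p.lower() in text for p in case.forbidden_phrases):
        return 0.0
    if not case.required_phrases:
        return 1.0
    hits = sum(p.lower() in text for p in case.required_phrases)
    return hits / len(case.required_phrases)


def migration_gate(baseline_scores: list[float],
                   candidate_scores: list[float],
                   tolerance: float = 0.02) -> bool:
    """Pass only if the migrated prompt's mean score stays within tolerance of the baseline."""
    if len(baseline_scores) != len(candidate_scores) or not baseline_scores:
        raise ValueError("score lists must be non-empty and the same length")
    baseline_mean = sum(baseline_scores) / len(baseline_scores)
    candidate_mean = sum(candidate_scores) / len(candidate_scores)
    return candidate_mean >= baseline_mean - tolerance


# Usage with an illustrative case (not real production data).
example = EvalCase(
    inputs={"client_segment": "retirees"},
    required_phrases=["past performance"],
    forbidden_phrases=["guaranteed returns"],
)
print(score_output("Past performance does not guarantee future results.", example))  # prints 1.0
print(migration_gate([0.90, 0.85], [0.89, 0.86]))  # prints True
```

Gating on a mean score with a small tolerance is one simple choice; a stricter gate could also require that no individual case regresses.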

02.

Amazon Bedrock with Claude for Compliant Content Generation

The core AI email generation engine was rebuilt on Amazon Bedrock, using Claude models for natural language generation. The AWS-native stack enabled VPC-isolated inference, keeping all AI processing within the firm's secure cloud boundary. Native AWS IAM integration provided granular access controls, and AWS-native logging produced audit-ready records of every generation event.
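A minimal sketch of a generation request for the Bedrock Runtime Converse API, as called through boto3. The model ID, prompt wording, and inference settings are assumptions for illustration, not details from this engagement. VPC isolation does not appear in this code: it is typically configured at the network level with an interface VPC endpoint (AWS PrivateLink) for `bedrock-runtime`, and access is scoped through IAM policies that grant `bedrock:InvokeModel` on specific models.

```python
"""Sketch: building an Amazon Bedrock Converse API request for email generation.

Assumptions: the model ID below is an example Claude identifier, not
necessarily the one used in this engagement; prompt text and inference
settings are illustrative.
"""
from typing import Any

EXAMPLE_MODEL_ID = "anthropic.claude-3-haiku-20240307-v1:0"  # assumption


def build_converse_request(system_prompt: str, user_prompt: str,
                           model_id: str = EXAMPLE_MODEL_ID,
                           max_tokens: int = 800,
                           temperature: float = 0.2) -> dict[str, Any]:
    """Return keyword arguments for the bedrock-runtime client's converse() call."""
    return {
        "modelId": model_id,
        "system": [{"text": system_prompt}],
        "messages": [
            {"role": "user", "content": [{"text": user_prompt}]},
        ],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": temperature},
    }


def extract_text(response: dict[str, Any]) -> str:
    """Concatenate the text blocks of the assistant message in a Converse response."""
    blocks = response["output"]["message"]["content"]
    return "".join(b["text"] for b in blocks if "text" in b)


# Usage (requires AWS credentials and Bedrock model access, so not executed here):
#   import boto3
#   client = boto3.client("bedrock-runtime", region_name="us-east-1")
#   request = build_converse_request(
#       "You draft investor emails that follow the firm's compliance guidelines.",
#       "Draft a quarterly update email for retirement-focused clients.",
#   )
#   draft = extract_text(client.converse(**request))
```

Keeping request construction separate from the network call makes prompts easy to unit-test and to swap between models during evaluation.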

03.

OpenSearch-Powered RAG Pipeline for Real-Time Compliance Validation

FINRA and SEC compliance rules were indexed as vector embeddings in Amazon OpenSearch. At generation time, each email draft was evaluated against the compliance rule set through a Retrieval-Augmented Generation (RAG) pipeline, flagging violations before content ever reached a compliance officer. This automated pre-screening dramatically reduced manual review burden and accelerated approval cycle times.
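The pre-screening step can be sketched as retrieve-then-review. In this minimal illustration, a toy bag-of-words vector replaces a real embedding model and an in-memory ranking replaces the OpenSearch k-NN index; `knn_query` only shows the shape of an equivalent OpenSearch k-NN query body, with `rule_embedding` as a hypothetical field name. The rule texts are illustrative paraphrases, not regulatory language.

```python
"""Sketch: retrieve relevant compliance rules for a draft, then build a review prompt.

Assumptions: embed() is a toy bag-of-words stand-in for a real embedding
model, and the in-memory ranking stands in for an OpenSearch k-NN index.
Rule texts are illustrative paraphrases, not regulatory text.
"""
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase term counts (stand-in for a vector model)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve_rules(draft: str, rules: dict[str, str], k: int = 2) -> list[str]:
    """Return the IDs of the k rules most similar to the draft."""
    q = embed(draft)
    ranked = sorted(rules, key=lambda rid: cosine(q, embed(rules[rid])), reverse=True)
    return ranked[:k]


def knn_query(vector: list[float], k: int = 2) -> dict:
    """OpenSearch k-NN query body; 'rule_embedding' is a hypothetical field name."""
    return {"size": k, "query": {"knn": {"rule_embedding": {"vector": vector, "k": k}}}}


def build_review_prompt(draft: str, rules: dict[str, str], rule_ids: list[str]) -> str:
    """Ask the model to check the draft against only the retrieved rules."""
    rule_lines = "\n".join(f"- [{rid}] {rules[rid]}" for rid in rule_ids)
    return (
        "Check the email draft against these rules. For each violation, "
        "return the rule ID and the offending sentence.\n"
        f"Rules:\n{rule_lines}\n\nDraft:\n{draft}"
    )


# Illustrative rule set and draft (hypothetical).
RULES = {
    "R1": "Do not promise or guarantee investment returns.",
    "R2": "Include a balanced discussion of risks alongside potential benefits.",
    "R3": "Identify the firm name clearly in every communication.",
}
DRAFT = "Our fund offers guaranteed returns with no risks for investors."
print(retrieve_rules(DRAFT, RULES))  # prints ['R1', 'R2']
```

Passing only the top-ranked rules keeps the review prompt short and focused, which is the main reason to put retrieval in front of the model rather than sending the full rule set with every draft.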

04.

Zero-Downtime Cutover with Full Compliance Parity

The migration was executed using a side-by-side validation approach, running new Bedrock-powered prompts in parallel with the legacy GPT stack. Evaluation scores were benchmarked against the existing production baseline, and stakeholder sign-off was obtained at each phase gate before progressive traffic cutover. The firm experienced zero production downtime and maintained uninterrupted compliance workflows throughout the migration.
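A minimal sketch of the progressive cutover, assuming hash-based routing by request ID and a hypothetical ladder of rollout percentages; the source does not state the actual stages or gate criteria. The stage advances only when the candidate's evaluation score meets the baseline and stakeholder sign-off is recorded.

```python
"""Sketch: deterministic, percentage-based traffic routing for a progressive cutover.

Assumptions: the stage percentages and gate inputs (evaluation scores,
sign-off flag) are hypothetical; the source does not specify them.
"""
import hashlib

ROLLOUT_STAGES = [0, 10, 25, 50, 100]  # hypothetical percent of traffic on Bedrock


def bucket(request_id: str) -> int:
    """Map a request ID to a stable bucket in [0, 100)."""
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def route(request_id: str, bedrock_percent: int) -> str:
    """Send a stable slice of requests to the new stack, the rest to the legacy one."""
    return "bedrock" if bucket(request_id) < bedrock_percent else "legacy_gpt"


def next_stage(current: int, candidate_score: float, baseline_score: float,
               signed_off: bool) -> int:
    """Advance one stage only if the score meets the baseline and sign-off exists."""
    if candidate_score < baseline_score or not signed_off:
        return current
    later = [p for p in ROLLOUT_STAGES if p > current]
    return later[0] if later else current
```

Hashing rather than random sampling means a given request ID always lands on the same stack at a given stage, which keeps side-by-side comparisons reproducible.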


Benefits

Significant and Sustainable Cost Reduction

By moving from OpenAI's API pricing to AWS-native model inference on Amazon Bedrock, the organization achieved a 42% reduction in AI infrastructure costs. The ability to select the right model for each task, balancing cost, latency, and capability, unlocked ongoing optimization opportunities that were unavailable under a single-vendor model.
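Per-task model selection can be sketched as picking the cheapest model that satisfies a task's capability and latency limits. The model names, costs, latencies, and capability tiers below are hypothetical placeholders, not actual Amazon Bedrock pricing or benchmarks.

```python
"""Sketch: choosing the lowest-cost model that meets a task's requirements.

Assumptions: model names, relative costs, latencies, and capability tiers
are hypothetical placeholders, not actual Amazon Bedrock pricing.
"""
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    relative_cost: float  # placeholder cost units per 1K tokens
    p95_latency_ms: int   # placeholder latency
    capability: int       # 1 = simple tasks ... 3 = complex drafting


@dataclass(frozen=True)
class TaskProfile:
    name: str
    min_capability: int
    max_latency_ms: int


CATALOG = [
    ModelOption("small-model", 1.0, 400, 1),
    ModelOption("medium-model", 4.0, 900, 2),
    ModelOption("large-model", 15.0, 2500, 3),
]


def select_model(task: TaskProfile, catalog: list[ModelOption] = CATALOG) -> ModelOption:
    """Return the cheapest model meeting the task's capability and latency limits."""
    eligible = [m for m in catalog
                if m.capability >= task.min_capability
                and m.p95_latency_ms <= task.max_latency_ms]
    if not eligible:
        raise LookupError(f"no model satisfies task {task.name!r}")
    return min(eligible, key=lambda m: m.relative_cost)


# Example tasks (hypothetical): a quick subject-line tweak vs. a full email draft.
subject_line = TaskProfile("subject_line", min_capability=1, max_latency_ms=1000)
full_draft = TaskProfile("full_draft", min_capability=3, max_latency_ms=5000)
print(select_model(subject_line).name)  # prints small-model
print(select_model(full_draft).name)    # prints large-model
```

Routing simple tasks to smaller models and reserving larger ones for complex drafting is one way a multi-model setup can lower average cost per request.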

Enterprise-Grade Security and Compliance Posture

All AI workloads now operate entirely within VPC-isolated AWS environments, eliminating data residency concerns and satisfying FINRA and SEC data governance requirements. Native AWS audit logging and IAM-enforced access controls provide the traceability and governance infrastructure required for regulatory examinations and internal compliance reporting.

Faster Content Generation with Automated Compliance Pre-Screening

The OpenSearch-powered RAG compliance validation pipeline reduced compliance review cycles by 50% by automatically flagging rule violations prior to human review. Compliant content generation is now 60% faster end-to-end, enabling marketing and compliance teams to operate at greater velocity without increasing compliance risk.

Vendor-Agnostic, Future-Proof AI Architecture

The re-engineered prompt library and evaluation framework let the firm adopt future Amazon Bedrock model updates, or any next-generation LLM, without rebuilding from scratch. The organization is no longer subject to OpenAI's pricing decisions or roadmap changes and has built the internal capability to migrate models as the AI landscape evolves.

Conclusion

This engagement demonstrates that LLM migration, when executed with the right methodology, is not simply a cost exercise; it is a strategic enabler. By partnering with NeenOpal, this financial services firm transformed a fragile, third-party-dependent AI capability into a compliant, cost-efficient, and future-ready enterprise asset built on Amazon Bedrock. For organizations operating in regulated environments where data security, compliance governance, and production reliability are non-negotiable, NeenOpal's LLM Migration Framework offers a proven, structured path to model-agnostic AI that delivers measurable business and technology outcomes at every stage.

FAQ

Here are some common questions about LLM migration, compliance-ready AI architectures, and the transition from OpenAI GPT to Amazon Bedrock:

Why migrate from OpenAI to Amazon Bedrock?

Organizations migrate from OpenAI GPT to Amazon Bedrock to gain stronger security controls, operate AI workloads within their cloud environment, and optimize infrastructure costs.

What are the benefits of Amazon Bedrock for regulated industries?

Amazon Bedrock enables secure, VPC-isolated AI workloads with native AWS governance controls, making it suitable for industries that must comply with strict regulations such as finance and healthcare.

How does Retrieval-Augmented Generation improve compliance workflows?

A RAG pipeline uses search systems like Amazon OpenSearch to retrieve relevant regulatory rules and validate AI-generated content in real time, helping organizations detect compliance issues before human review.
